AI is for operators

Why judgment beats execution now

Everyone’s asking the wrong question about AI. The whole conversation is about replacement. Will it take your job, will it make you obsolete. It’s the wrong frame.

Here’s what’s actually happening: AI is eliminating execution. Not judgment. Not taste. Not the ability to know what’s worth building. Just the mechanical distance between a decision and a result.

I’ve spent 20 years building browsers at Opera. Products used by hundreds of millions of people. The bottleneck was never knowing what to build. It was always how long it took to get from “I have an idea worth testing” to “it’s shipped.” Months of coordination, implementation, iteration, debugging, revision. All execution.

AI is compressing that to near zero.

I feel this personally. When I was finishing university, I almost got depressed, not because I lacked ideas, but because I could see that the things I wanted to build would require either decades of solo effort or teams of hundreds. So I took the managerial path. I built organizations instead of code. And it worked — I don’t regret it! — but something was lost.

These days, for the first time in twenty years, I can feel the joy of creation again. The same feeling I had as a teenager, programming on paper (you know, at school, when just a lesson doesn’t fill up your whole ADHD attention span :p), optimizing assembler by hand, trying to squeeze a shading routine into fewer CPU cycles. AI gave that back to me. I have never felt better about what’s possible.

And when execution disappears, something interesting happens. The only thing that matters is whether you had the right judgment in the first place.

This is not about experience level. I’ve seen brilliant work from people in their first year. Ambition and taste aren’t a function of years on the job. But the gap between what you can see and what you can ship, that grows with experience. The more you know, the more you see what should exist. And the more painful the execution bottleneck becomes.

The people who benefit most from AI are operators. People with hard-won judgment who were always bottlenecked by execution. The senior engineer who sees the right abstraction immediately but used to spend two days implementing it. The strategist who knows the positioning but used to spend a week building the deck. AI doesn’t give these people new ideas. It removes the friction between their ideas and reality. And of course, the world has always benefited from those who don’t know something’s impossible and do it anyway. AI supercharges that too.

Andrej Karpathy wrote this week about building personal knowledge bases with LLMs. What struck me most: the human barely touches the wiki directly. The LLM handles compilation, maintenance, cleanup. All execution. The human’s job is deciding what to look at and what questions to ask.

But this only works if the knowledge base is clean. We spent decades learning that code rots without refactoring. Same applies to your data now. Inconsistent, fragmented knowledge in, garbage reasoning out. Data hygiene is the new code hygiene. The operator’s discipline, knowing what’s noise, what’s signal, what structure the information needs, that’s the factor that impacts AI output more than anything else.

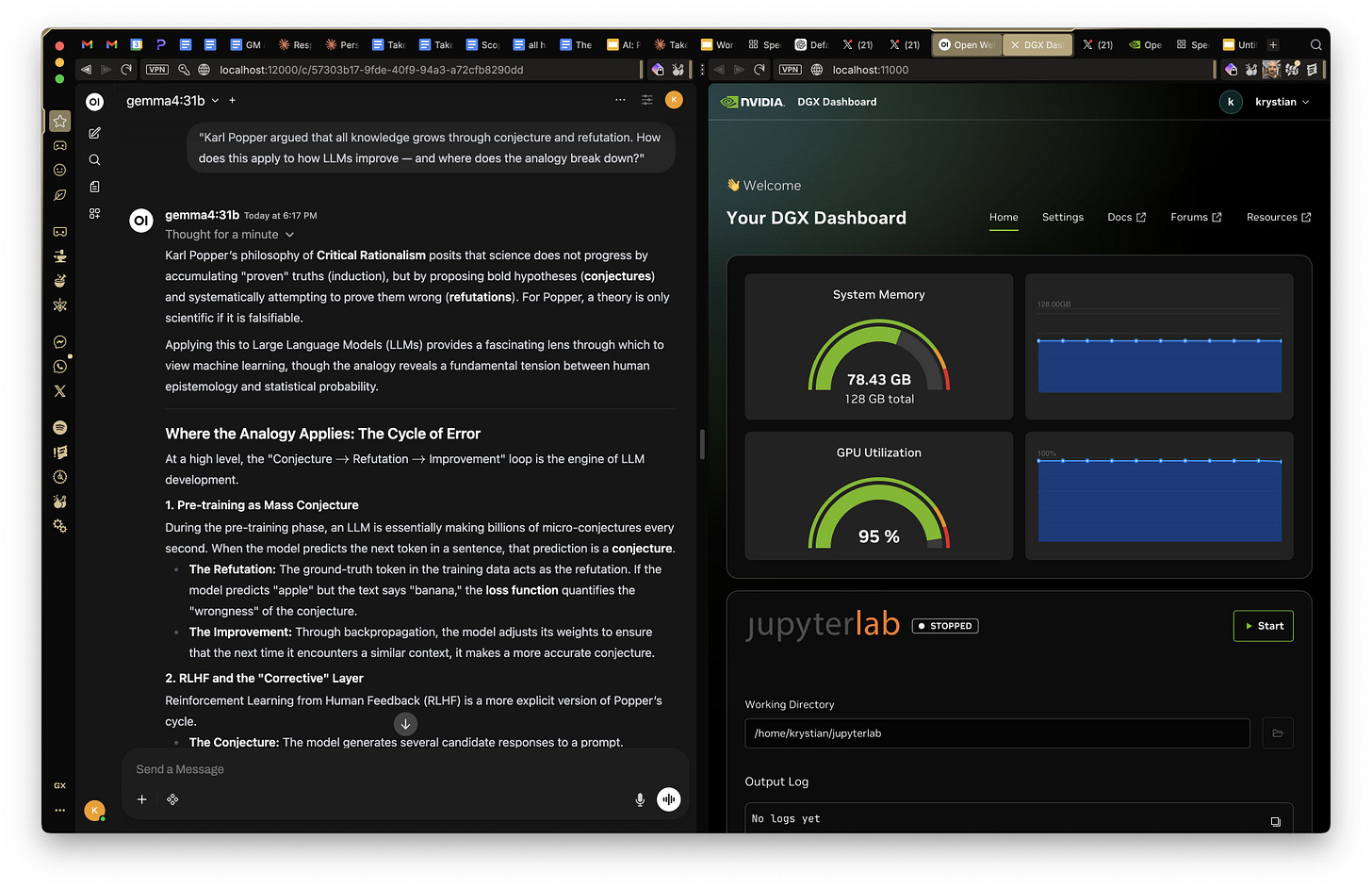

Google released Gemma 4 this week. I downloaded it to my NVIDIA Spark the same afternoon. It’s sitting right on my desk together with Nemotron 3 Nano. They’re fast, genuinely smart, and within 20 minutes I’ll have my Mac Mini running an agent farm using these models through the night, researching agent lifecycles while I sleep. More on that soon. But the point is, the time from idea to getting things done has shrunk to almost nothing.

This is the lens I apply to everything I build. Not “how do we add AI to this?” but “what happens when execution disappears and the user’s judgment is the only input that matters?”

That’s what I mean by AI for operators. AI that makes you the bottleneck — in the best possible way. Because if your judgment is good, everything downstream is right. If it’s bad, no amount of execution speed saves you.

I should be honest about the limits of my own argument. Saying AI only eliminates execution might be too comfortable. Given the right principles and frameworks, AI can reason, plan, validate, and self-correct. So how much of what we call “judgment” is actually pattern-matching from experience, culture, and education — just execution at a higher level of abstraction? And how much is something else, genuine creativity, whatever that means? I don’t know. I’m not sure anyone does yet.

What I do know is that the people who assume AI can’t touch their thinking will be the last to notice when it starts to. And the people who stay curious about where the line actually is, those are the ones I want to build for.

I’ll be writing here about this thesis as I pressure-test it against the products I’m building, against the industry, against my own assumptions. Some of it will be wrong. As Popper would say, knowledge only grows through conjecture and refutation. I’d rather be wrong in public than right in private.

Hi Krystian. I wonder if there's another gap in the argument. It's in how judgement and taste are acquired.

I believe that people with expertise acquire much of those traits *through* the execution, not just by passively studying stuff. In other words, they have judgement and taste precisely because they *executed* (and reflected upon the process of execution itself - the content of it, not just the *outcomes*). We learn much about the substance of a thing by doing the thing.

This is probably where you and I will disagree. The fact that someone produced some brilliant work in the software domain is a very low bar, given how short-lived software is, and how unexplored the domain is in general.

If AI removes the experience of *executing*, it's possible many people will have no other way to acquire taste, or to develop (good) judgement.

I can provide maybe a distant analogue of this. Consider people who know the history of the world (at least to some reasonable degree: major eras, societies, etc.), maybe through a classic liberal arts education, and the impact this has on their civic and moral attitudes; and then, at the other end of the spectrum, people who don't know history. Both groups can and do function in the world, sometimes very successfully, but their moral and intellectual depth is often very different, and I fear the huge risks sit mostly with the latter group if they are given much power or responsibility. And somehow when you use the words "taste" and "judgement", exactly this sort of distinction comes to mind.

I worry very much about people not acquiring broad and deep knowledge when using AI to "build". Yes, it builds, but very little human attention is given to any of the details.

Those who come after "us" (the current top-productivity-output generation) may get much degraded access to the paths for developing the type of judgement or taste you say are needed to become an operator.

I agree these qualities *are* key; knowing what you aim for; inspecting results via some mature frame which includes "taste" - those are very potent things and not everyone has them. With AI, I worry that maybe fewer people will develop them. But I can't be certain.

I'll add a hopeful point so as not to sound so pessimistic. It seems to me that AI is actually allowing huge creative flourishing, which I think you also described in your post above: the sense that I can build again, and that it's realistic to fit in some extra creative directions which I couldn't pursue without full-time effort (and months or more of calendar time). This might be a huge net positive and I hope it stays this way! A new type of global "opportunity" (which on average should really increase the wealth of societies which allow this).

But the question is, for both a) the current working population and b) the coming-of-age population: do they have a reasonable world model and judgement in some domain which will let them continue/start being operators of the kind you describe? Or will AI flatten the outcome space for them because their experience base is too narrow to actually produce _good_ things?

And going forward, will the nature of cognition around using AI help people build expertise, or will it hinder this process?

(added, sorry, more edits probably incoming) One extra thought: it's possible that you are looking at the positive side of this because you are surrounded by people who are clever or creative enough to build new things. But maybe the majority of humans in knowledge work aren't like that. They don't have the capacity to be operators. For them, it might actually be a disaster if they can't adapt. Can they? No idea. Do I believe many folks could be much more creative if given support / tools (AI :), opportunity? You bet! But society works on many layers, and the net result might be negative for large swaths of people who aren't as quick to adapt as you and other operators :) are. I'm not trying to single *you* out, I just mean the general category of skilled professionals with unusually high curiosity and intellectual capacity.