Are juniors screwed?

The ladder changed, not the climb

My first post argued that AI eliminates execution and makes judgment the only thing that matters. The obvious follow-up question: if that’s true, how does anyone develop judgment in the first place?

Because here’s the uncomfortable part. Before AI, you became an operator by doing execution work for years. You wrote the code, built the decks, ran the analyses, made the mistakes, learned the patterns. Execution was the training ground for judgment. It was slow and often painful, but it worked.

That layer is disappearing.

Which creates a real paradox: companies want operators, but the traditional path to becoming one is being pulled out from under people’s feet. If AI handles the execution, where do juniors learn?

I care about this question for two reasons. First, I remember being junior. I was arrogant, probably stupid in many ways, but I was curious, ambitious, and cared about producing quality work. And that came before I had any real experience. I was doing heavy lifting in assembler and 3D graphics, not because someone told me to, but because I wanted to see if I could squeeze a few more cycles out of a shading routine (and I was lucky to have a 56 kbps modem and access to the Hornet FTP archive). Nobody taught me taste. I developed it by caring obsessively about whether the output was good or bad.

Second, my son is heading to university soon. The world he’s entering is completely different from the one I entered. This isn’t abstract for me.

That instinct to care doesn’t go away because AI exists. But the path changes.

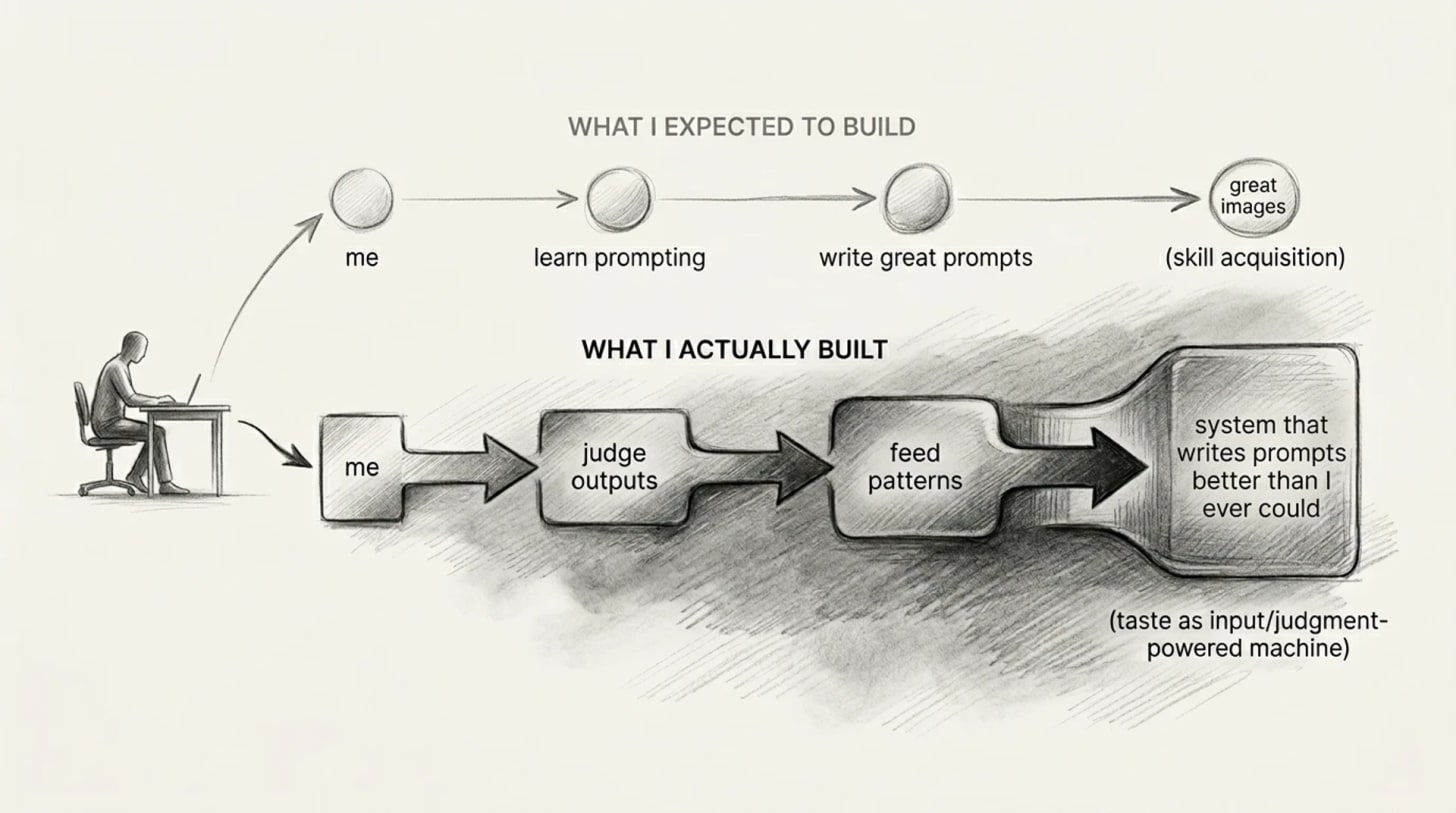

The old path was linear: do tasks, learn patterns, build judgment, become operator. You couldn’t skip steps. The execution work was the curriculum.

The new path is different. You use AI to handle execution, then spend your time reviewing and correcting the output. Did the AI make the right architectural choice? Is this positioning actually sharp or just smooth? Does this code solve the real problem or just the stated one? The skill shifts from doing to evaluating.

This is actually faster, if you approach it right. A junior today can see more outputs, compare more approaches, and test more assumptions in a week than I could in a year of manual work. The iteration cycle compresses. Taste can develop faster when you have more material to judge.

Here’s a real example. I was recently working on a project that needed AI-generated images with a consistent visual style across dozens of outputs. This is a project I work on in the evenings, by the way. Not a browser ;-). Just something I wanted to build.

I started the traditional way, by researching how other people build image prompts, trying to understand how different models respond to different instructions. It was painfully slow.

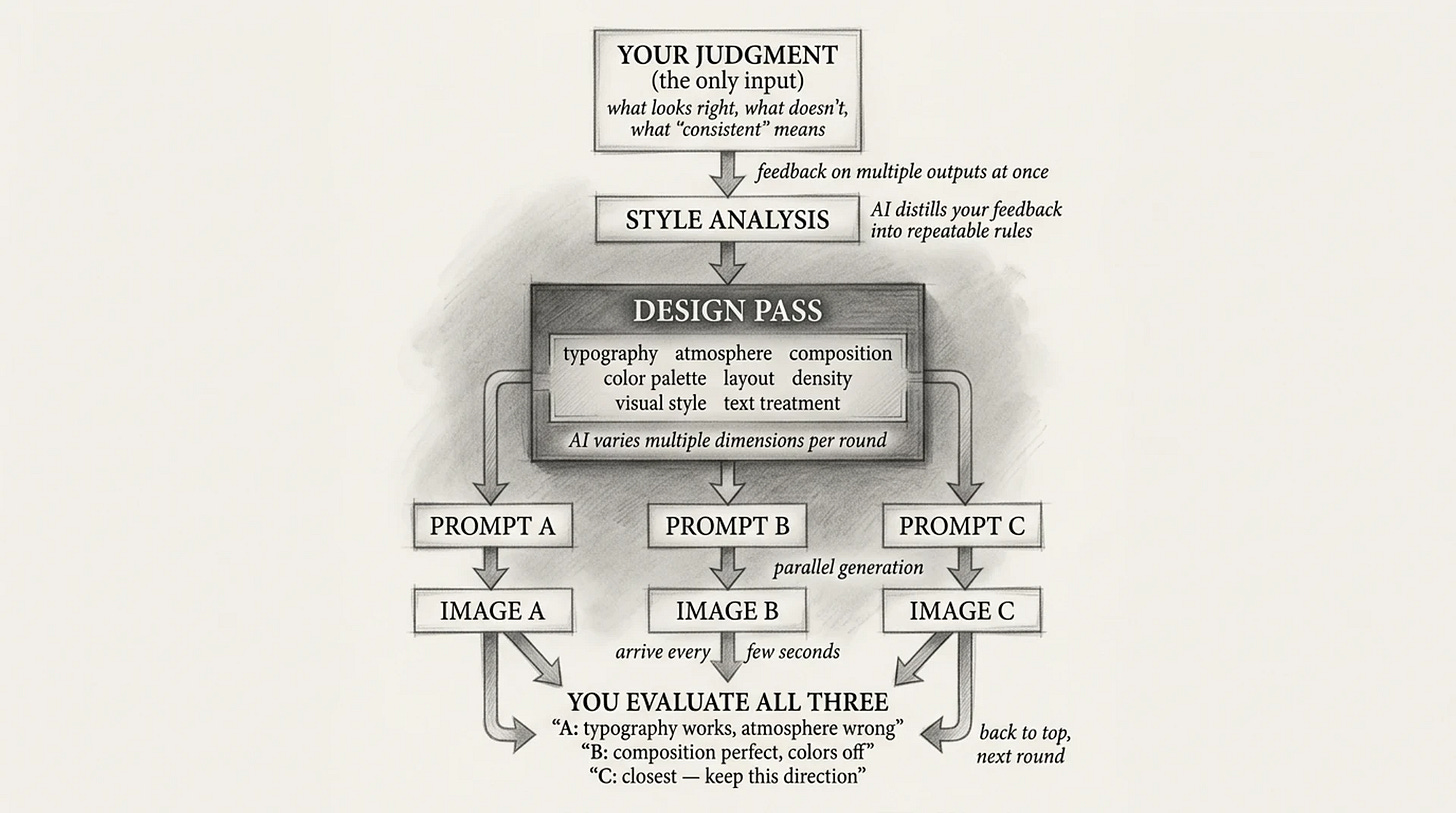

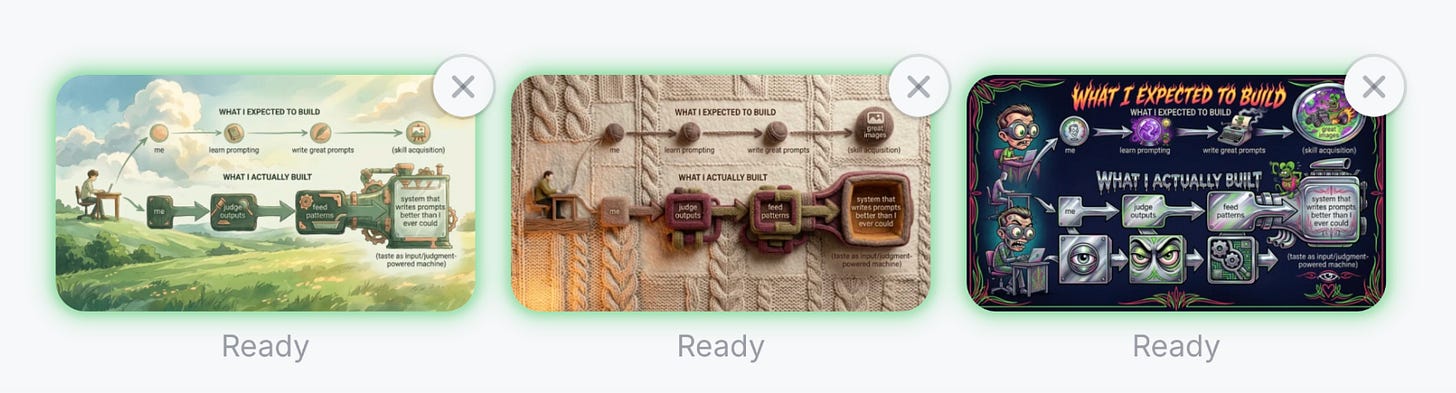

So I changed the approach. I set up a feedback loop: the AI generated prompts, I evaluated the images as they came in, one every few seconds, and just gave quick feedback. This is good. That’s wrong. The texture works but the composition doesn’t. I noticed patterns in what made outputs consistent and fed those observations back. Within an hour, burning through an enormous number of tokens, I’d compressed what would have been weeks of manual experimentation.
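For readers who want to see the shape of that loop in code, here is a minimal sketch. It is not the system described above: `FeedbackLoop`, `run_loop`, `generate`, and `review` are hypothetical names, `generate` stands in for whatever model call produces an output, and `review` stands in for the human giving a quick note.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class FeedbackLoop:
    """Accumulates reviewer notes and folds them into the next prompt."""
    base_prompt: str
    notes: List[str] = field(default_factory=list)

    def next_prompt(self) -> str:
        # Every note given so far becomes part of the next prompt.
        if not self.notes:
            return self.base_prompt
        guidance = "; ".join(self.notes)
        return f"{self.base_prompt}. Style notes: {guidance}"

    def record(self, note: str) -> None:
        # Skip empty notes and exact repeats so the prompt does not bloat.
        note = note.strip()
        if note and note not in self.notes:
            self.notes.append(note)


def run_loop(
    loop: FeedbackLoop,
    generate: Callable[[str], str],  # hypothetical stand-in for a model call
    review: Callable[[str], str],    # hypothetical stand-in for human judgment
    rounds: int,
) -> List[str]:
    """Generate, review, and feed the review back in, `rounds` times."""
    outputs = []
    for _ in range(rounds):
        output = generate(loop.next_prompt())
        outputs.append(output)
        loop.record(review(output))
    return outputs
```

The only thing the human supplies here is the return value of `review`. Everything else is plumbing, which is the point of the pattern.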

But here's what surprised me. The result wasn't what I expected. I didn't end up understanding how to write great image prompts myself. Instead, I built a system that generates them better than I ever could.

My judgment was the input — what looks right, what doesn't, what "consistent" actually means in context. The AI handled everything else.

That’s the operator pattern in miniature. You don’t become good at execution. You build systems that make your execution irrelevant. Your taste is the only thing that can’t be automated out of the loop.

And of course someone will read this and say: once your taste is extracted into the system, you’re no longer needed. Fair objection.

All I can say is what I observe right now: the system I built only works because I keep feeding it new judgment. New styles, new contexts, new “that’s wrong and here’s why.” The moment I stop, it stops improving or, worse, it drifts away. Whether that stays true is one of the most interesting open questions in AI. I’m betting it will, but I’m honest enough to say that’s a bet, not a proof.

But there’s a trap, and it’s not just for juniors. Anyone who just accepts what the AI gives them learns nothing. You become a prompt-typist, not an operator. The AI did the work, you shipped the result, and you have no idea why it was good or bad. You skipped the judgment part entirely. This is everyone’s problem.

The people who win are the ones who obsess over why. Why is this output good? Why is that one garbage? What would I change and why? They treat AI output the way you treat KV cache optimization - as something to evaluate, challenge, and squeeze until it’s actually right. Not something to accept.

The people who lose are the ones who confuse speed with learning. They ship more but understand less. And when the AI gets something wrong in a way that matters, they can’t catch it.

A small habit worth trying. Every time you review an AI output, stop and think about why it's good or why it's bad. Better yet, write it down. Not for anyone else, for yourself. Keep it as an MD file. Try this for two weeks and my bet is it will accelerate your taste development faster than months of execution work. You're training your judgment on every output instead of every task. Inspired by the Haystack Method from Rohit Bhargava's Non-Obvious, just applied to AI.
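If a plain text editor feels like too much friction, a few lines of Python can do the appending. This is only a sketch: the function name `log_review`, the file name, and the entry layout are assumptions, not a prescribed format.

```python
from datetime import date
from pathlib import Path


def log_review(path, output_label, verdict, why, day=None):
    """Append one review note to a Markdown journal and return the entry text."""
    day = day or date.today()
    entry = (
        f"## {day.isoformat()} - {output_label}\n"
        f"- Verdict: {verdict}\n"
        f"- Why: {why}\n\n"
    )
    # Open in append mode so earlier notes are never overwritten.
    with Path(path).open("a", encoding="utf-8") as journal:
        journal.write(entry)
    return entry


if __name__ == "__main__":
    # Hypothetical file name and note, for illustration only.
    log_review(
        "taste-journal.md",
        "landing page draft",
        "bad",
        "smooth but says nothing specific",
    )
```

The "Why" line is the part that matters. The verdict alone is just a vote.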

I should be honest: I don’t know how this plays out at scale. We’re running a massive experiment on professional development and nobody knows the results yet.

But I’m actually really optimistic. Boris Cherny, who built Claude Code at Anthropic, has a parable I love. He compared AI coding to the Gutenberg Press. Before Gutenberg, scribes controlled text. The printing press eliminated scribes. But scribes were never the writers. Gutenberg enabled millions more people to read and write, and that became fundamental to schooling, to work, to culture. It made it possible for far more people to become creators - writing books that others actually wanted to read.

The same thing is happening now. The fact that everyone will be able to code doesn’t mean everyone will build great products. But it means we’ll all get new powers. You could become proficient in areas where it used to take years to acquire a skill. The path gets shorter. The barrier drops. Will the curious people lead the way, or will only the paranoid survive? Time will tell. I’m betting on both.

Nice food for thought! You mentioned that “The fact that everyone will be able to code doesn’t mean everyone will build great products,” and I think it’s really easy now to drown in the sheer number of products and apps. Some of them could be useful, but no one will know about them, because now everyone positions themselves as an expert and produces a lot of useless things.

My question is: how do you find the right product among such a huge number of apps and tools? How do you recognize something that’s actually worth using?