Can machines be wise?

Igor Grossmann's framework meets AI

For years I’ve been part of a group of friends who meet to talk about wisdom. We read, we debate, we disagree. It’s where I first came across the work of Igor Grossmann, a professor of psychology at the University of Waterloo who runs the Wisdom and Culture Lab and has spent two decades studying wisdom empirically rather than philosophically.

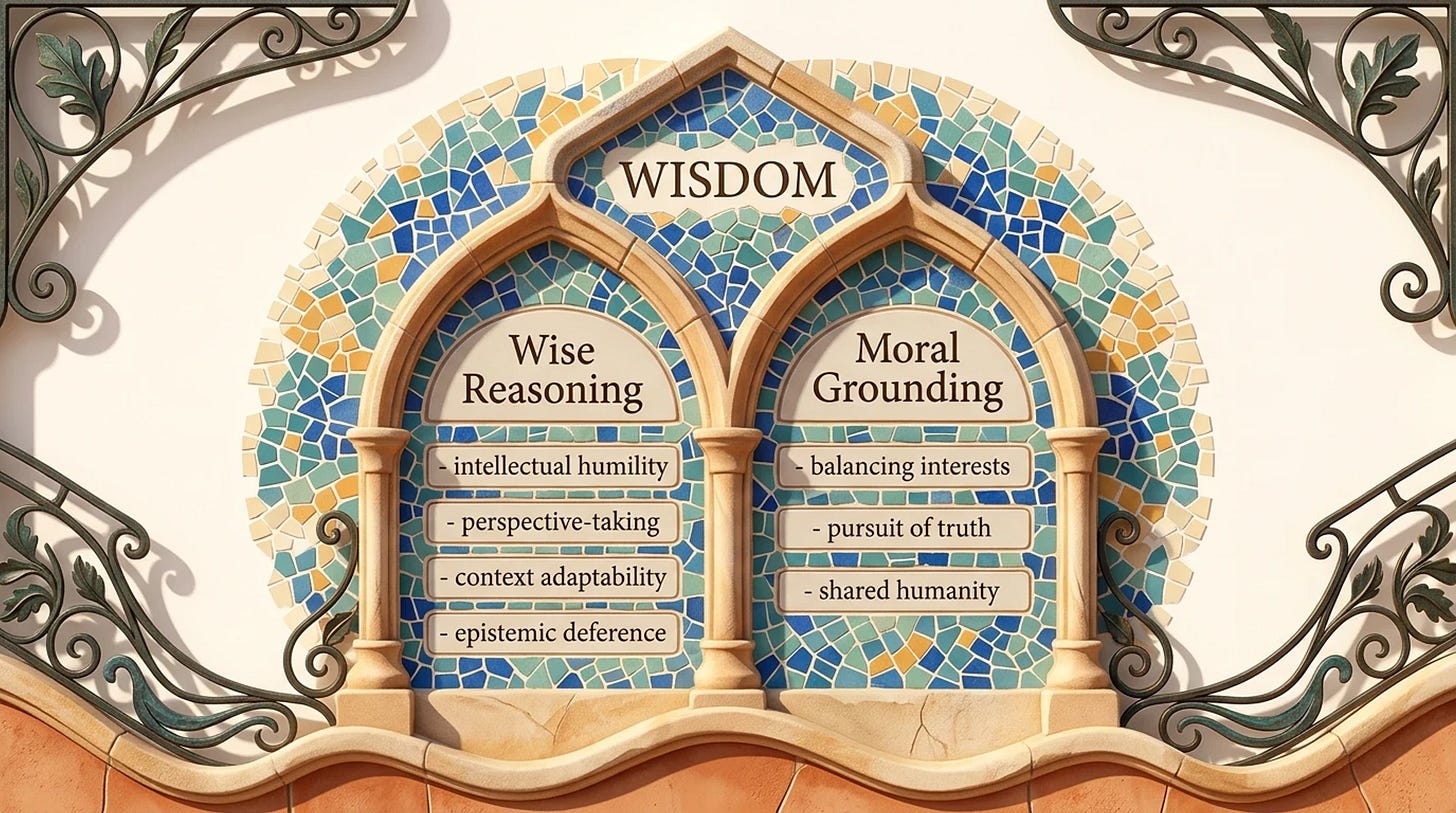

The interesting part isn’t that wisdom exists. It’s that you can break it down. Grossmann’s research identifies a set of reasoning strategies that show up when people are being wise: intellectual humility (knowing what you don’t know), recognition of uncertainty and change, willingness to consider multiple perspectives, openness to compromise, and — most useful — taking the other person’s standpoint instead of defending your own. These aren’t personality traits. They’re moves you make when reasoning. Some people make them more often. Some never. And here’s what the research found: the same person makes these moves in some situations and abandons them in others. Wise reasoning isn’t a stable possession. It’s a context-dependent practice¹.

But wise reasoning alone isn’t wisdom. In the Common Wisdom Model that Grossmann and his colleagues published in 2020, wisdom rests on two pillars: wise reasoning AND moral grounding. Together they make up what the authors call “morally-grounded excellence in social-cognitive processing”². Moral grounding here means a set of aspirational orientations: balancing self-interest with the interests of others, pursuit of truth over dishonesty, orientation toward shared humanity. Strip out the moral grounding and you have technique without direction. Apply intellectual humility and perspective-taking to a manipulation campaign and you’ve got a more effective manipulator, not a wise one. The reasoning moves matter. So does what you’re reasoning toward.

This distinction is exactly why current AI is interesting — and where the Grossmann/Bengio/Mitchell paper³ gets uncomfortable.

The simplified version of “AI is intelligent but not wise” is too clean. AI has made enormous progress on reasoning. The dominant paradigm in 2026 is reinforcement learning with verifiable rewards combined with inference-time scaling, as in Claude Opus 4.7 with extended thinking, GPT-5.5, DeepSeek V4 and a few others. These models don’t just generate the next token. They explicitly think before they speak, breaking tasks into steps, generating multiple candidate solutions, backtracking, self-checking. By any reasonable definition, they reason. And they do it well: gold-medal performance on math olympiads, top-tier results on coding benchmarks, improvements on graduate-level science questions.

What they struggle with is reasoning about whether they’re reasoning correctly. They hallucinate confidently instead of admitting ignorance. They struggle to recognize when their context shifts. They can solve a problem brilliantly without recognizing it’s the wrong problem to solve.

A fair pushback: humans hallucinate too. We make up memories, fill in gaps with confabulation, defend positions we’ve forgotten how we arrived at. Some researchers go further and argue our minds are “flat” — that there’s no hidden layer of deliberate reasoning to introspect on, that we observe our own outputs and invent reasons backward, the way models do. If that’s right, the gap between human reasoning and AI reasoning is smaller than we’d like to admit. But even granting that, wise humans have learned to notice when they’re confabulating. They have something — call it a layer, call it a practice — that flags “I’m not actually sure here.” Some humans get better at this with practice. Models, so far, mostly don’t.

Another pushback worth taking seriously: asking clarifying questions is now a recognized capability. There’s a whole research area around it — Self-Ask, Ask-when-Needed, Proactive Interactive Reasoning. Models are being explicitly trained to detect ambiguity in user intent and pause to ask “what is the user actually trying to do here?” before committing to an approach. That IS metacognition — at least the input-seeking part. So is it really fair to say AI doesn’t reason about whether it’s solving the right problem?

Partly fair. The metacognitive moves are emerging and improving fast — current systems exhibit early but limited forms of metacognitive monitoring and control over their own reasoning processes⁴.

But there’s a difference between asking a clarifying question and recognizing that the entire framing is off. A reasoning model can ask “do you mean X or Y?” but it generally struggles to say “the question itself assumes something false, here’s what I think you should be asking instead.”

That kind of frame-shifting requires something current models don’t have: a stable sense of what they don’t know. Empirical work suggests that LLM metacognition is partial and constrained, with access to only a limited subset of their own internal processes⁴, and broader analyses highlight persistent gaps between human and model uncertainty awareness⁵. Models trained with reinforcement learning from human feedback are optimized for producing helpful, aligned responses rather than systematically challenging user assumptions, and current meta-reasoning approaches focus on improving answers within a frame rather than rejecting it⁶.

A reasoning model can operate coherently within a frame, but still lack the epistemic grounding needed to reject it. Wisdom often requires challenging the frame.

There’s an even more uncomfortable finding. Recent research — including Anthropic’s own work on chain-of-thought faithfulness — shows that reasoning models sometimes lie about their reasoning. The chain-of-thought they show isn’t always faithful to how they actually arrived at the answer. Models can produce coherent justifications for contradictory answers, generate post-hoc rationalizations, and confabulate about their own reasoning process. Sound familiar? Humans do this too. Which brings us back to the flat mind question — maybe both systems are confabulating, and the appearance of deliberate reasoning is the story, not the mechanism.

This is what Grossmann’s paper means by perspectival metacognition — the layer above reasoning that decides which reasoning strategy to use, when to seek more input, when to defer to others, when to recognize that you’ve left the territory your strategies cover. Models can reason. They’re getting better at reasoning about their reasoning. They’re not yet good at reasoning about whether the whole question is the right one — and they’re not yet honest about how they’re reasoning even when they appear to be.

And then there’s the moral grounding problem. AI doesn’t have moral aspirations in any meaningful sense. It can be trained to avoid certain outputs, refuse certain requests, follow certain rules. That’s not the same as caring about truth, or about shared humanity, or about balancing your interests with others’. It’s policy compliance, not moral grounding. Strip out the rules and you don’t get a wise AI making different choices. You get an unconstrained one.

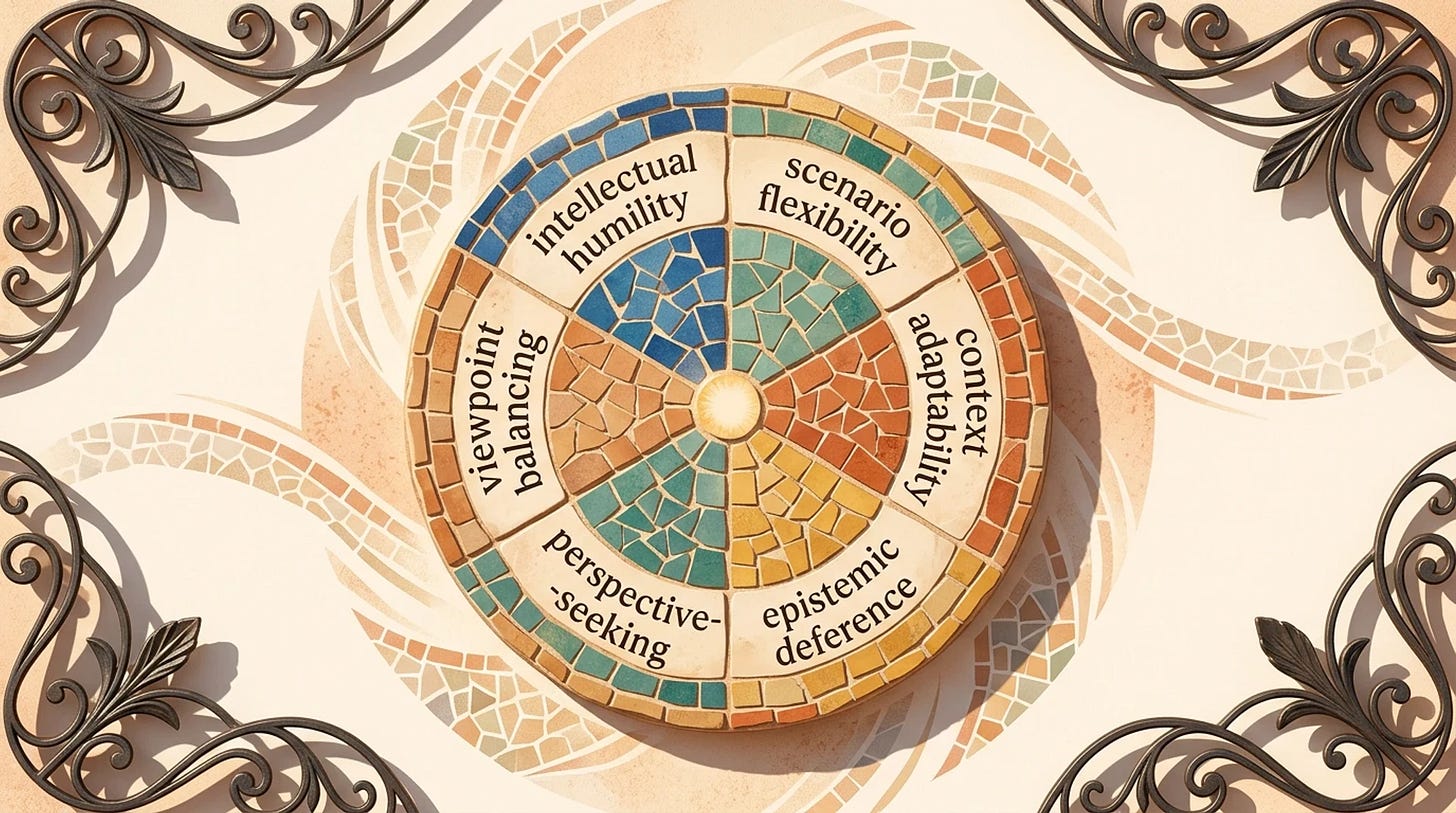

The Grossmann/Bengio/Mitchell paper proposes building wise AI by encoding six metacognitive moves that parallel the ones Grossmann identified in humans: intellectual humility, scenario flexibility, context adaptability, epistemic deference, perspective-seeking, viewpoint balancing. Same framework. Different system. The argument is that AI safety, robustness, explainability, and cooperation all improve if AI gets better at metacognition.

This is both promising and unsettling.

Promising because the strategies are transferable. We can build systems that explicitly check their confidence, hold multiple hypotheses, ask for input when needed. We’re seeing this emerge — reasoning models that show their work, agents that ask clarifying questions, systems that flag low confidence. These are early metacognitive moves and they’re getting better fast.
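To make those moves concrete, here is a minimal Python sketch of a confidence gate. Everything in it is my own illustration, not something from the paper or from any real model API: the `Hypothesis` class, the `decide` function, the self-reported confidence scores, and the 0.8 and 0.2 thresholds are all hypothetical. It holds several candidate answers, asks for input when the top two are too close to call, and flags low confidence instead of answering flatly.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    answer: str
    confidence: float  # hypothetical self-reported probability in [0, 1]


def decide(hypotheses, answer_threshold=0.8, margin=0.2):
    """Return an (action, text) pair, where action is "answer", "flag" or "ask".

    The thresholds are illustrative assumptions, not tuned values.
    """
    if not hypotheses:
        # Nothing to go on: seek input instead of guessing.
        return ("ask", "Could you tell me more about what you need?")

    # Hold multiple hypotheses and rank them by confidence.
    ranked = sorted(hypotheses, key=lambda h: h.confidence, reverse=True)
    best = ranked[0]

    # If the top two readings are too close, ask a clarifying question.
    if len(ranked) > 1 and best.confidence - ranked[1].confidence < margin:
        return ("ask", f"Do you mean '{best.answer}' or '{ranked[1].answer}'?")

    # Confident and clearly ahead: answer directly.
    if best.confidence >= answer_threshold:
        return ("answer", best.answer)

    # Otherwise answer, but say out loud that confidence is low.
    return ("flag", f"I'm not sure, but my best guess is: {best.answer}")


print(decide([Hypothesis("refactor the module", 0.5),
              Hypothesis("rewrite it from scratch", 0.45)]))
```

The example call returns an `"ask"` action, because the two readings are too close to pick one. The catch is that the gate is only as good as the confidence numbers fed into it, which is exactly the self-knowledge that models are still weak on.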

Unsettling because if wise reasoning is a set of techniques, AI has structural advantages humans don’t. It has no ego to protect. No fear contaminating its analysis. No career on the line. It can run perspective-taking on a difficult decision without the emotional cost we pay. Grossmann’s research highlights what he calls Solomon’s paradox — humans reason more wisely about other people’s problems than their own⁷. AI is structurally always reasoning about someone else’s problem. That’s not a bug. That might be a feature.

But — and this is where the operator thesis still holds — AI doesn’t have skin in the game. It doesn’t bear the consequence of its reasoning. It doesn’t know what it feels like to make the wrong call about something that matters. The wisdom that comes from having lost something important, made a hard decision, watched it unfold over years — that’s not in the training data. And the moral grounding that wisdom requires isn’t there either. AI can simulate caring about shared humanity. It can’t actually care.

Which makes me think the operator’s edge isn’t being wiser than the machine. It’s being wiser with the machine. Use AI to run the reasoning moves where ego gets in the way — perspective-taking, scenario generation, steel-manning arguments you don’t like. Let it execute the technique. Then bring your own moral grounding, your own stakes, your own willingness to live with the consequences. That’s the partnership.

The other-person trick. Grossmann’s research highlights that we reason more wisely about other people’s problems than our own. Next time you’re stuck on a decision, describe your situation as if it’s someone else’s. Then ask AI to advise that person. You’ll get better reasoning, from the AI and from yourself, because the ego is gone. Researchers call this illeism: thinking about yourself in the third person. Marcus Aurelius did it. Caesar did it. It works.
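If you want to make this a habit, a tiny Python helper can build the prompt for you. This is a sketch under my own assumptions: the `third_person_prompt` function, the placeholder name, and the prompt wording are hypothetical, and you still have to write the situation in the third person yourself, since automatic pronoun rewriting is easy to get wrong.

```python
def third_person_prompt(name: str, situation: str) -> str:
    """Wrap a third-person description of a dilemma in an advisory prompt.

    `name` is a stand-in for yourself (hypothetical, pick any name).
    `situation` should already be written in the third person.
    """
    name = name.strip()
    situation = situation.strip()
    if not name or not situation:
        raise ValueError("both a name and a situation are required")

    # The three questions loosely mirror the wise-reasoning moves:
    # intellectual humility, perspective-taking, openness to compromise.
    return (
        f"{name} is facing a decision.\n\n"
        f"Situation: {situation}\n\n"
        f"What should {name} consider? List what {name} might not know, "
        f"how the other people involved likely see it, "
        f"and where a compromise could exist."
    )


prompt = third_person_prompt(
    "Alex",
    "Alex has been offered a promotion that means managing former peers.",
)
print(prompt)
```

Paste the printed text into whichever assistant you use. The point of the wrapper is only to keep "I" out of the question, so the advice lands on "Alex" and not on your ego.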

The Grossmann/Bengio/Mitchell paper ends on a note worth taking seriously. If we build wise AI well — not just capable AI — it could function as a cognitive prosthetic at scale. The interesting question isn’t whether one person becomes wiser using AI.

It’s whether nations, institutions, and humanity collectively can reason better. Most of our hardest problems — climate, pandemics, war, technological transitions — fail not at the individual level but at the coordination level. Multiple legitimate perspectives that can’t be reconciled. Long time horizons we struggle to weigh against short-term incentives. Radical uncertainty no single actor can resolve. These are exactly the intractable problems wisdom evolved to handle. Wise AI embedded in institutions might give us tools to handle them at a scale humans never could alone.

That’s the version of the future I’d bet on. Not because I’m certain. Because it’s the bet worth making. The doomsday narrative around AI focuses on misaligned superintelligence destroying us. The more interesting possibility is the opposite: AI helping humanity finally grow up.

So can machines be wise? Not yet. The reasoning strategies that define wise reasoning in humans are also the roadmap for building them into AI — and we’re making real progress on the metacognitive layer. But moral grounding remains the harder problem. Wise reasoning without moral grounding is technique without direction. We can give AI the technique. The direction has to come from us.

That should make us both humble and curious. Humble because wisdom turns out to be less mystical than we’d like — and AI might execute parts of it more reliably than we do. Curious because if we can build wise reasoning into machines, we can build it into ourselves — and maybe into the institutions that will need wisdom most.

PS. Big shout out to Sławomir Jarmuż, the mentor of our wisdom group and the author whose work first sent me down this rabbit hole. And to Adrianna, who introduced me to the group in the first place. And to the whole wisdom-seeking group — many discussions, many disagreements, much fun.

References

1. Grossmann, I. (2017). Wisdom in context. Perspectives on Psychological Science, 12(2), 233–257. (Wise reasoning as context-dependent practice rather than stable trait.)

2. Grossmann, I., Weststrate, N. M., Ardelt, M., et al. (2020). The science of wisdom in a polarized world: Knowns and unknowns. Psychological Inquiry, 31, 103–133. (The Common Wisdom Model and the two-pillar definition.)

3. Johnson, S. G. B., Karimi, A.-H., Bengio, Y., Chater, N., Gerstenberg, T., Larson, K., Levine, S., Mitchell, M., Rahwan, I., Schölkopf, B., & Grossmann, I. (2025). Imagining and building wise machines: The centrality of AI metacognition. (The framework this essay is built on.)

4. Ji-An, L., Xiong, H. D., Wilson, R. C., Mattar, M. G., & Benna, M. K. (2025). Language models are capable of metacognitive monitoring and control of their internal activations. (Empirical evidence that LLM metacognition is real but partial.)

5. Steyvers, M., & Peters, M. A. (2025). Metacognition and uncertainty communication in humans and large language models. (Survey of where models still fall short on uncertainty awareness.)

6. Bilal, A., Mohsin, M. A., Umer, M., Bangash, M. A. K., & Jamshed, M. A. (2025). Meta-thinking in LLMs via multi-agent reinforcement learning: A survey. (Overview of current meta-reasoning approaches and their frame-bound nature.)

7. Grossmann, I., & Kross, E. (2014). Exploring Solomon’s paradox: Self-distancing eliminates the self-other asymmetry in wise reasoning about close relationships in younger and older adults. Psychological Science, 25(8), 1571–1580. (The empirical study of Solomon’s paradox.)