What being an operator actually means

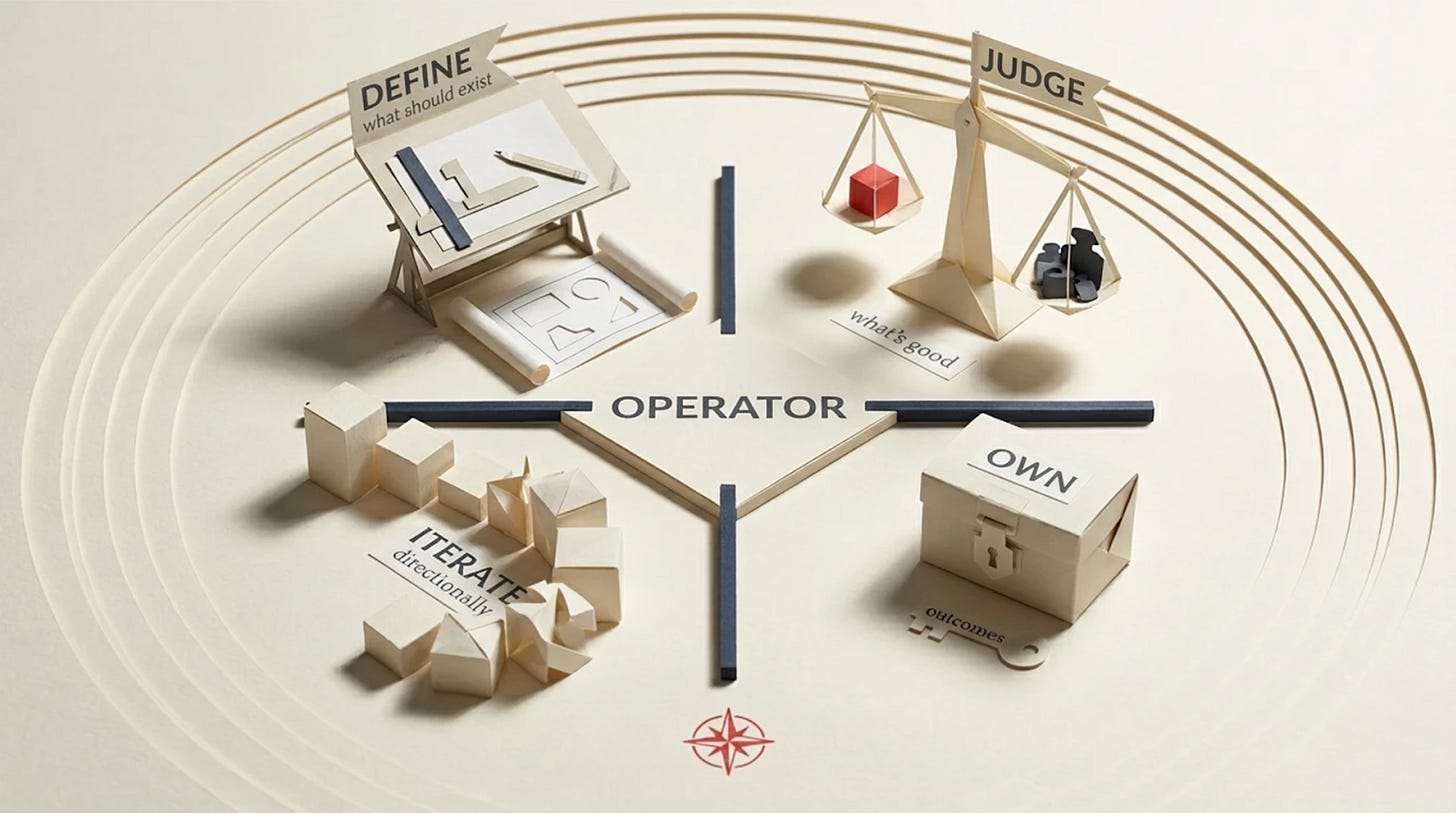

Four things that separate building from prompting

I’ve used the word “operator” twice now without properly defining it. That’s not fair. If I’m going to claim that AI shifts everything to judgment, I should be specific about what judgment actually looks like in practice.

Here are four things. If you do all four, you’re an operator. If you don’t, AI will be a very fast way to produce things nobody needs.

Define what should exist

Not “write code.” Not “build a deck.” Not “design a screen.” Those are execution tasks. The operator question comes before all of that: what’s the actual problem, and is it worth solving?

This is problem selection, framing, and prioritization. It’s the hardest part because it’s where most projects go wrong, and no amount of execution speed fixes a bad starting point. AI can generate a hundred solutions in an afternoon. But a hundred solutions to the wrong problem is worse than no solutions at all, because now you’ve spent time evaluating garbage.

I’ve seen this pattern for 20+ years, including in my own teams. The ones that struggle aren’t the ones that can’t build fast enough. They’re the ones that can’t decide what to build.

Judge quality

AI can generate infinite outputs. Operators decide what’s good, what’s mediocre, and what’s wrong.

This is taste. And taste is weirdly hard to talk about because it sounds subjective, even elitist. But it’s not. It’s pattern recognition built from years of seeing what works and what doesn’t. The designer who looks at a layout and immediately knows the hierarchy is off. The engineer who reads an architecture proposal and sees the scaling problem that will hit in six months. The strategist who reads positioning copy and knows it could be about any company.

I haven’t seen anyone Google their way to taste or prompt their way to it yet. Maybe it’s possible. I’d love to see the evidence. But so far, taste seems to develop the old-fashioned way: by caring, repeatedly, over a long time, about whether the output is actually good.

Iterate directionally

Not just “try again.” It’s tempting to ask again with slightly different words and hope for something better. I catch myself doing it too. But that’s a slot machine approach: you’re pulling the lever hoping for a different result without changing anything that matters.

There’s a line often attributed to Einstein: you can’t solve a problem with the same thinking that created it. Whether he said it or not, it’s exactly right when applied to AI. Rephrasing the same question is useless. The question was wrong, not the answer. Change the question.

Operators iterate directionally. This is wrong because of X, so change the approach to Y. The texture is right but the composition fails, so keep the texture and restructure. The argument is solid but the order kills the pacing, so resequence without losing the logic.

This is feedback loops and course correction. It requires knowing not just that something is wrong, but why it’s wrong and what would make it right. AI is extremely good at executing corrections. But it needs a human who can diagnose the actual problem, not just the symptom.

Own outcomes

Not “I completed the task.” Operators say “this worked or it didn’t, and it’s on me.”

This is the rarest one. Most people in organizations are optimized for task completion. Ship the feature. Deliver the deck. Hit the deadline. Whether the feature actually moved the metric, whether the deck convinced anyone, whether the deadline mattered — that’s someone else’s problem.

Operators close the loop. They care about what happened after the work shipped. Did it work? If not, why? What do I change next time? This is where judgment actually compounds, because you’re building a feedback loop between your decisions and their consequences.

AI can’t do this for you. It has no skin in the game, as Taleb would say. It doesn’t know if the output worked in the real world. It faces no consequences for being wrong. Only you know that, and only if you bother to check.

The test

Here’s a simple way to know if you’re operating or just executing. Ask yourself: if the AI disappeared tomorrow, would you still know what to build and whether it was good? If yes, you’re an operator using AI as leverage. If no, you’re a passenger and the AI is driving.

That’s not a judgment on anyone’s worth. Plenty of brilliant people are early in developing these skills. But knowing the difference matters, because the people who think they’re operating when they’re actually just prompting are the most vulnerable ones in the room.

Before you prompt, answer three things. What problem am I solving? How will I know if the output is good? What does “wrong” look like? Write them down, one line each. It takes 30 seconds and sounds obvious. Try it anyway: most people discover they can’t answer the second one without ten minutes of thinking. That’s the gap between prompting and operating. AI is very good at executing wishes. The results vary.

Bonus: once you have your three answers, paste them twice inside your prompts. Sounds silly, but repeating your intent in the prompt measurably improves output quality across all major models. Your judgment, stated clearly and repeated, is literally the best prompt engineering there is.
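If it helps to make the habit concrete, here is a minimal sketch of the three one-line answers turned into a reusable block that appears twice in a prompt. It assumes the prompt is a plain string; `Intent` and `build_prompt` are hypothetical names for illustration, not part of any model’s API, and putting the block once before and once after the task is just one assumed way to “paste them twice.”

```python
from dataclasses import dataclass


@dataclass
class Intent:
    """Hypothetical container for the three answers written down before prompting."""
    problem: str  # What problem am I solving?
    good: str     # How will I know if the output is good?
    wrong: str    # What does "wrong" look like?

    def as_block(self) -> str:
        # One line per answer, so a blank answer is easy to spot.
        return (
            f"Problem: {self.problem}\n"
            f"Good looks like: {self.good}\n"
            f"Wrong looks like: {self.wrong}"
        )


def build_prompt(intent: Intent, task: str) -> str:
    """Hypothetical helper: state the intent, give the task, then repeat the intent."""
    block = intent.as_block()
    # Assumption: repeating the same block before and after the task is one
    # simple way to include the intent twice in a single prompt string.
    return f"{block}\n\n{task}\n\n{block}"


if __name__ == "__main__":
    # Illustrative values only; replace them with your own three lines.
    intent = Intent(
        problem="Trial users sign up but never finish setup.",
        good="A setup flow a new user can finish in under five minutes.",
        wrong="More screens, more options, or copy that could fit any product.",
    )
    print(build_prompt(intent, "Propose a redesigned onboarding flow."))
```

The point of the structure is that the hard part happens before the code runs: if you can’t fill in `good` or `wrong` with something specific, no amount of repetition will rescue the prompt.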